Introduction

Yesterday, DeepSeek released Janus-Pro, a unified model for multimodal understanding and text-to-image generation. The abstract reminded me of a talk I gave in 2016, where I mentioned that “data, compute and algorithm” are the three driving forces of AI. This was a common understanding at that time. The tech report starts with “In this work, we introduce Janus-Pro, an advanced version of the previous work Janus. Specifically, Janus-Pro incorporates (1) an optimized training strategy, (2) expanded training data, and (3) scaling to larger model size.”

The three improvements map roughly onto algorithm, data and compute. The three driving forces remain unbeaten and still play a crucial role in AI advancement.

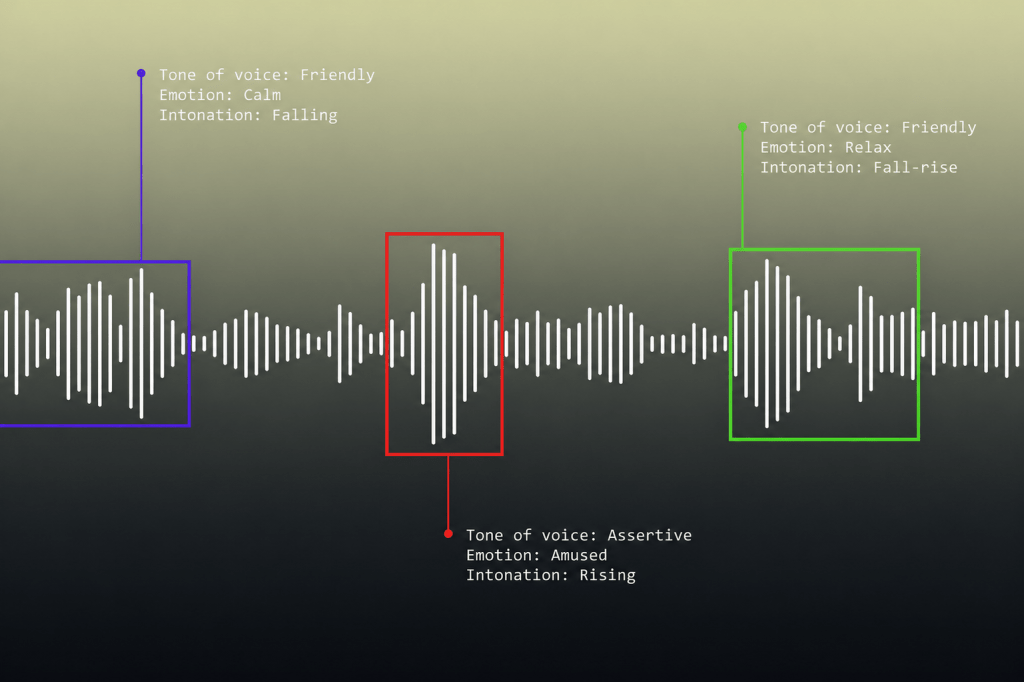

1. Two levels of captions

Consider this image and corresponding captions:

Caption 1: Skyscrapers in which businesses lead the country’s economy.

Caption 2: This image displays four black skyscrapers that are made of glass and steel. They are reflecting the light from the sky. The sky is light blue and there are some clouds in the sky. There is a building in the foreground. The building is also made of glass and steel and is reflecting the light from the sky. The style of the image is realistic.

The first caption is typical of what we would find on the internet for images like this. It captures the high-level semantic information in the image, whereas the second caption is a more detailed, fine-grained description of the image. The image and captions are from the PixelProse dataset ("From Pixels to Prose"). Understanding an image in terms of fine-grained local features and globally consistent semantic features is not new. Progressive GAN demonstrated that different layers of the network capture global and local features. StyleGAN used this property to modify the global or local features of a generated face.

2. Decoupling encoders

For multimodal understanding, it is necessary to have the image and its corresponding captions in the same embedding space. CLIP introduced image and text encoders that were trained jointly with a contrastive objective, which pulls matching image-caption pairs together and pushes mismatched pairs apart. This forces a shared embedding space in which the embeddings capture the semantic meaning of the image. LLaVA introduced a bridge layer to map the embeddings from a Vision Transformer into the input token space of LLaMA. As models evolved to handle both multimodal understanding and generation tasks, it became common practice to use a single, unified encoder module to obtain the image embeddings.
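To make the contrastive idea concrete, here is a minimal sketch of a CLIP-style symmetric contrastive loss in plain Python. It is an illustration, not CLIP's actual implementation: the function names are hypothetical, the embeddings are hand-written toy vectors rather than encoder outputs, and the fixed temperature of 0.07 is an assumed value.

```python
import math

def l2_normalize(vector):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]

def cross_entropy(logits, target_index):
    """Numerically stable cross-entropy of one row of logits against one target."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[target_index]

def contrastive_loss(image_embeddings, text_embeddings, temperature=0.07):
    """Symmetric contrastive loss: pair k of images matches pair k of texts.

    temperature=0.07 is an assumed illustrative value.
    """
    images = [l2_normalize(v) for v in image_embeddings]
    texts = [l2_normalize(v) for v in text_embeddings]
    n = len(images)
    # logits[i][j] = scaled cosine similarity between image i and text j
    logits = [
        [sum(a * b for a, b in zip(img, txt)) / temperature for txt in texts]
        for img in images
    ]
    # Image-to-text direction: each row should peak on its own caption.
    image_to_text = sum(cross_entropy(logits[k], k) for k in range(n)) / n
    # Text-to-image direction: each column should peak on its own image.
    text_to_image = sum(
        cross_entropy([logits[row][k] for row in range(n)], k) for k in range(n)
    ) / n
    return (image_to_text + text_to_image) / 2

# Toy batch: two images and two captions in a 2-D embedding space.
aligned = contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
swapped = contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
print(aligned < swapped)
```

When each image embedding sits next to its own caption the loss is close to zero, and when the captions are swapped the loss is large. Minimising this quantity is what pushes the two encoders into a shared space.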

The key observation in Janus was that the representation required for multimodal understanding (semantic) differs greatly from the representation required for generation (perceptual). Janus therefore introduced two independent visual encoding pathways, one for multimodal understanding and one for generation tasks.
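The routing idea can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the Janus implementation: `semantic_encoder`, `perceptual_encoder` and `DecoupledVisualFrontEnd` are hypothetical names, and the "encoders" are simple arithmetic stand-ins for real networks. The only point is that the same image is encoded differently depending on the task.

```python
from dataclasses import dataclass
from typing import Callable, List

def semantic_encoder(pixels: List[float]) -> List[float]:
    """Toy stand-in for an understanding encoder: a coarse global summary."""
    return [sum(pixels) / len(pixels), max(pixels) - min(pixels)]

def perceptual_encoder(pixels: List[float], levels: int = 4) -> List[int]:
    """Toy stand-in for a generation encoder: one discrete code per pixel,
    so local detail is preserved."""
    return [min(int(p * levels), levels - 1) for p in pixels]

@dataclass
class DecoupledVisualFrontEnd:
    """Holds two independent pathways and routes an image by task."""
    understanding: Callable[[List[float]], List[float]]
    generation: Callable[[List[float]], List[int]]

    def encode(self, pixels: List[float], task: str):
        if task == "understanding":
            return self.understanding(pixels)
        if task == "generation":
            return self.generation(pixels)
        raise ValueError(f"unknown task: {task!r}")

front_end = DecoupledVisualFrontEnd(semantic_encoder, perceptual_encoder)
image = [0.0, 0.5, 1.0]  # a three-"pixel" image with values in [0, 1]
semantic_view = front_end.encode(image, "understanding")
perceptual_view = front_end.encode(image, "generation")
print(semantic_view, perceptual_view)
```

The semantic view compresses the whole image into a short summary, while the perceptual view keeps one code per pixel. A unified encoder would have to serve both needs with a single representation.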

3. Why is it important?

By decoupling the encoder for multimodal understanding from the visual encoder for generative tasks, Janus has given us a framework to jointly obtain semantic and perceptual embeddings. Most “multimodal” models focus on image and text modalities. However, the real world has audio, video and many forms of structured and unstructured data. Separate encoders and adapter heads could be added to this architecture to obtain joint embeddings of all these different modalities, moving towards truly “multimodal” models.
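As a rough sketch of how such an extension might look, the snippet below registers one encoder and one adapter per modality and projects everything to a shared width. This is speculative and not taken from the Janus papers: `MultimodalEmbedder`, `linear_adapter`, the toy encoders and the hand-picked weights are all hypothetical, and real adapters would be learned.

```python
from typing import Callable, Dict, List, Tuple

Vector = List[float]

def linear_adapter(weights: List[Vector]) -> Callable[[Vector], Vector]:
    """Return a function that applies a fixed linear projection (one row per output)."""
    def project(features: Vector) -> Vector:
        return [sum(w * x for w, x in zip(row, features)) for row in weights]
    return project

class MultimodalEmbedder:
    """Registry of (encoder, adapter) pathways that all map to one shared width."""

    def __init__(self, shared_dim: int) -> None:
        self.shared_dim = shared_dim
        self.pathways: Dict[str, Tuple[Callable, Callable]] = {}

    def register(self, modality: str, encoder: Callable, adapter: Callable) -> None:
        self.pathways[modality] = (encoder, adapter)

    def embed(self, modality: str, raw) -> Vector:
        encoder, adapter = self.pathways[modality]
        embedding = adapter(encoder(raw))
        if len(embedding) != self.shared_dim:
            raise ValueError(f"{modality} adapter must output {self.shared_dim} values")
        return embedding

def toy_image_encoder(pixels: Vector) -> Vector:
    # Three features: mean, max, min.
    return [sum(pixels) / len(pixels), max(pixels), min(pixels)]

def toy_audio_encoder(samples: Vector) -> Vector:
    # Two features: mean energy and peak amplitude.
    return [sum(s * s for s in samples) / len(samples), max(abs(s) for s in samples)]

embedder = MultimodalEmbedder(shared_dim=2)
embedder.register("image", toy_image_encoder,
                  linear_adapter([[1.0, 0.0, 0.0], [0.0, 1.0, -1.0]]))
embedder.register("audio", toy_audio_encoder,
                  linear_adapter([[2.0, 0.0], [0.0, 2.0]]))

image_embedding = embedder.embed("image", [0.0, 1.0])
audio_embedding = embedder.embed("audio", [0.5, -0.5])
print(image_embedding, audio_embedding)
```

Each encoder is free to produce features of whatever size suits its modality. Only the adapter has to agree on the shared width, which is why adding a new modality does not disturb the existing pathways.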

Conclusion

The release of Janus-Pro underscores the ongoing advancements in multimodal AI, emphasizing the importance of semantic and perceptual embeddings in enhancing both understanding and generation tasks. By building on the decoupled encoding pathways introduced in Janus, Janus-Pro keeps semantic comprehension distinct from perceptual synthesis. This architectural shift sets a precedent for integrating modalities beyond text and images, including audio, video, and structured data, into a unified AI framework.

As AI models continue to evolve, the ability to jointly leverage distinct representations will become essential for tasks requiring both high-level comprehension and fine-grained detail generation. Future advancements may focus on refining adapter modules, improving cross-modal learning, and expanding embedding spaces to accommodate diverse data streams. With these developments, the pursuit of truly generalized multimodal AI moves one step closer to reality.